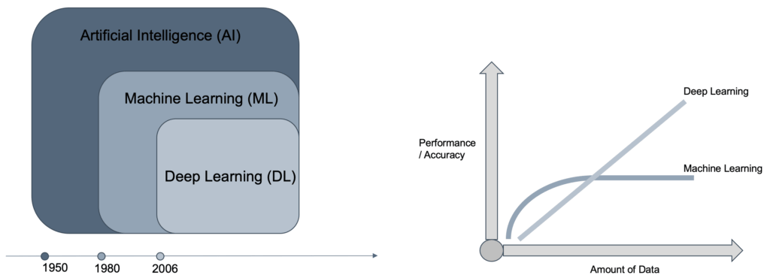

As we wrote in the first part of our tech blog series “AI in Cybersecurity”, the term Artificial Intelligence is currently ubiquitous. But many do not know the differences or boundaries between Artificial Intelligence (AI), Machine Learning (ML) and Deep Learning (DL). It is therefore worth defining these terms and concepts, because they are often used interchangeably although their meanings differ.

Artificial Intelligence

AI is a branch of computer science which deals with the acquisition of cognitive skills. In this sense, a computer or piece of software shows a human-like ability to adapt to different environments and tasks and to transfer knowledge between them. In our understanding, AI according to this definition does not currently exist, although the term is often used. Nevertheless, AI has subareas which are available and already applied to use cases today:

Machine Learning (ML) provides computers with the ability to learn without being explicitly programmed and offers various techniques that can learn from data and make predictions on it. Deep Learning (DL) has become feasible in the last few years thanks to several developments that make it a useful option at a large enough scale: the growing amount of available data, new storage technologies that can hold significant amounts of data, and increased compute power that can process this massive amount of information. In addition, the availability of AI frameworks (such as those provided by Amazon Web Services and Google Cloud Platform) gives even non-data scientists the ability to develop AI-based solutions, including in the Cybersecurity area.

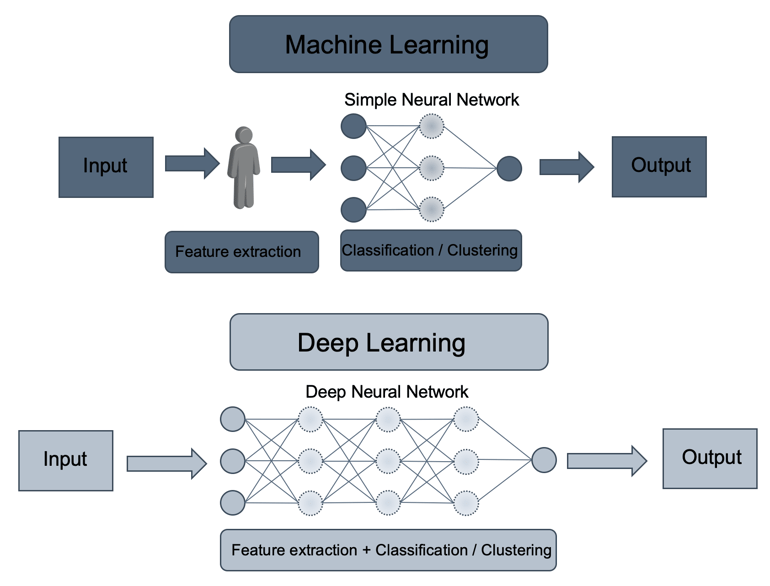

In a direct comparison between DL and ML, DL shows significant advantages in performance and accuracy, and it can handle a much larger amount of data. What is the reason for this? DL brings two significant advantages: it does not require manual feature engineering, and it uses multiple layers. Manual feature extraction by humans is not feasible given the massive amount of unstructured data, or in cases where security analysts and engineers do not know which characteristics are relevant for detecting threats because of the enormous number of potential attacker behaviours. As you can see in the image below, a DL network consists of one input layer, one output layer and multiple fully-connected hidden layers in between. Each layer is represented as a series of neurons and extracts higher-level features of the data until the final layer essentially decides what the input shows. The more layers the network has, the higher-level the features it can learn.

The different layers provide the ability to analyse datasets in different ways (random subset, reduction, etc.). Each layer transforms its input data into abstractions. In other words, the layers put different views on the data and average the results. The output layer combines those features to create predictions. As an analogy, you can imagine that each layer represents one person, following the principle that more people working together lead to better results. DL comes into play when the desired objective requires analysing a massive number of factors linked by unknown and complex interrelationships.
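The layered structure described above can be sketched as a forward pass through a small fully-connected network. This is a minimal illustration, not a trained model: the layer sizes, the ReLU and sigmoid activations, the random weights and the four input features are all assumptions made for the example.

```python
import math
import random


def dense(inputs, weights, biases):
    """One fully-connected layer: every neuron sees every input value."""
    return [
        sum(w * x for w, x in zip(neuron_weights, inputs)) + b
        for neuron_weights, b in zip(weights, biases)
    ]


def relu(values):
    """Non-linearity applied after each hidden layer (assumed choice)."""
    return [max(0.0, v) for v in values]


def sigmoid(value):
    """Squash the single output neuron into a score between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-value))


def init_layer(n_in, n_out, rng):
    """Random, untrained weights; training would adjust these."""
    weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(features, layers):
    """Pass the input through each hidden layer, then the output layer."""
    activation = features
    for weights, biases in layers[:-1]:
        activation = relu(dense(activation, weights, biases))
    weights, biases = layers[-1]
    return sigmoid(dense(activation, weights, biases)[0])


rng = random.Random(0)
layer_sizes = [4, 8, 8, 1]  # input, two hidden layers, output (assumed sizes)
layers = [init_layer(a, b, rng) for a, b in zip(layer_sizes, layer_sizes[1:])]

# Hypothetical, already normalised input features for one event
score = forward([0.2, 0.7, 0.1, 0.9], layers)
print(f"untrained score: {score:.3f}")
```

Because the weights are random, the score carries no meaning yet. The point is only the data flow: each hidden layer turns the previous layer's output into a new representation, and the output layer combines it into one prediction.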

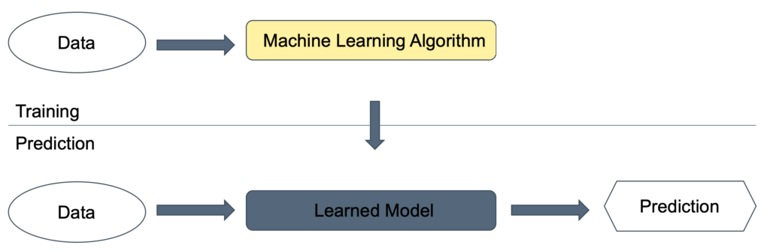

Machine Learning – training and prediction phases

The ML process consists mainly of two phases: training and prediction. First, in the training phase, the ML algorithm is fed with specific datasets as input. The ML algorithm creates a learned model which then allows predictions on input data. It is important to highlight that the data used for learning should not be reused to verify whether the learned model is working correctly, because results on data which has already been used to train the model do not reliably show how it performs on new data. It is therefore advisable to split existing datasets into one part for training and one part for evaluating the model.
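The split recommended above can be sketched in a few lines. The helper name `train_test_split`, the 80/20 ratio, the fixed seed and the toy dataset are assumptions for illustration; ML libraries typically ship their own, more complete helpers for this.

```python
import random


def train_test_split(samples, test_ratio=0.2, seed=42):
    """Shuffle a copy of the data and hold back a share for evaluation."""
    shuffled = samples[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_ratio))
    # The first n_test samples are reserved for evaluation only
    return shuffled[n_test:], shuffled[:n_test]


# Hypothetical dataset of (sample id, label) pairs
dataset = [(i, i % 2) for i in range(10)]
train_set, test_set = train_test_split(dataset)

print(len(train_set), "samples for training")
print(len(test_set), "samples for evaluation")
```

The model is trained on `train_set` only and judged on `test_set`, so the evaluation uses data the model has never seen.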

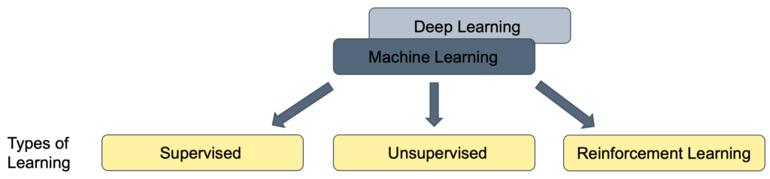

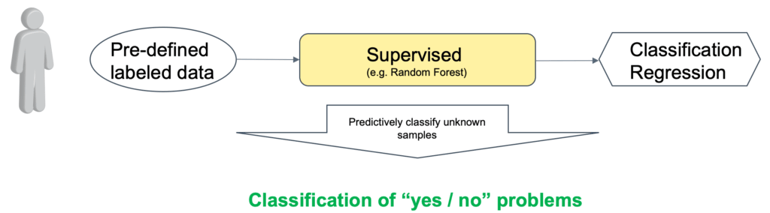

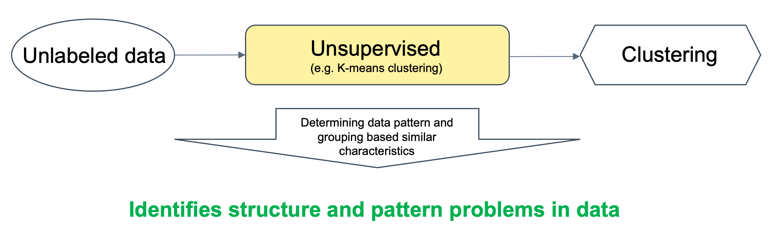

The following image shows the three different types of learning (training): Supervised, Unsupervised and Reinforcement Learning. The main difference is the type of problem each method can solve, both in general and when applied to cybersecurity.

Supervised learning

The problem solved by supervised learning is called classification, where data is sorted into categories, in the simplest case as a “yes/no” decision. In the context of Cybersecurity, this means that we need to classify whether input data is benign or malicious.

A prerequisite for the training phase is that an engineer feeds examples of malicious activity into the learning algorithm. Thus, malicious data needs to be known in order to train the model. As global security experts do not know about benign and malicious activities in local networks, supervised learning is often applied in a global context. Here, Internet-based activities and files are analysed continuously, and malicious fragments are extracted. With this information, the prediction model is updated regularly. Common Cybersecurity use cases are sandboxing systems and securing Internet-based communication.
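To make the benign/malicious classification concrete, here is a minimal sketch that uses a nearest-centroid classifier. The algorithm is chosen only because it is short; it is not the method of any particular product. The two numeric features per sample and all values are hypothetical.

```python
import math


def centroid(vectors):
    """Average feature vector of one class."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def train(samples):
    """Training phase: learn one centroid per label from labelled examples."""
    by_label = {}
    for features, label in samples:
        by_label.setdefault(label, []).append(features)
    return {label: centroid(vectors) for label, vectors in by_label.items()}


def predict(model, features):
    """Prediction phase: pick the label whose centroid is closest."""
    return min(model, key=lambda label: math.dist(model[label], features))


# Hypothetical labelled examples; both classes must be known in advance
training_data = [
    ([0.1, 0.2], "benign"),
    ([0.2, 0.1], "benign"),
    ([0.9, 0.8], "malicious"),
    ([0.8, 0.9], "malicious"),
]

model = train(training_data)
verdict = predict(model, [0.85, 0.7])
print(verdict)
```

The sketch only works because labelled malicious examples exist, which is exactly the prerequisite described above.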

Unsupervised learning

In cases where the malicious activity, that is, the character and structure of malicious data, is unknown, unsupervised learning can be used to identify structures and patterns in the data (clustering). In Cybersecurity, unsupervised learning is applied when there is no information about whether data is benign or malicious. The basic concept is to find logical groupings of data (local norms) and identify deviations (outliers) from those norms. It can therefore be applied in a local context and is well suited to local networks.
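The idea of a local norm and its outliers can be sketched without any labels. As a deliberate simplification, the example treats all observations as a single group instead of running a full clustering algorithm, and it measures deviation with the median absolute deviation. The traffic values and the tolerance factor are assumptions.

```python
import statistics


def find_outliers(values, tolerance=5.0):
    """Return indices of values that deviate strongly from the local norm."""
    norm = statistics.median(values)
    deviations = [abs(v - norm) for v in values]
    mad = statistics.median(deviations)  # typical deviation from the norm
    if mad == 0:
        # All typical values are identical: anything different is an outlier
        return [i for i, d in enumerate(deviations) if d > 0]
    return [i for i, d in enumerate(deviations) if d / mad > tolerance]


# Hypothetical kilobytes sent per host in a local network, no labels given
kilobytes_sent = [100, 110, 95, 105, 98, 102, 5000]
outliers = find_outliers(kilobytes_sent)
print([kilobytes_sent[i] for i in outliers])
```

Nothing in the data says which host is malicious. The last host is flagged only because it deviates from what is normal in this particular network.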

Reinforcement learning

Last but not least, reinforcement learning provides the ability to adjust the model based on a feedback loop, such as a reward. It can be understood as an addition to supervised and unsupervised learning, but retraining the model can also be risky, especially in the context of Cybersecurity.
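A reward-driven feedback loop can be sketched with an epsilon-greedy strategy, one of the simplest reinforcement learning schemes. The three response actions and their fixed reward values are hypothetical stand-ins for real feedback, which would be noisy and would have to be trusted.

```python
import random

# Hypothetical fixed rewards standing in for feedback on each response action
FEEDBACK = {"ignore": 0.1, "alert": 0.6, "quarantine": 0.9}


def run_feedback_loop(rounds=200, epsilon=0.1, seed=1):
    """Estimate the value of each action from the rewards it receives."""
    rng = random.Random(seed)
    actions = list(FEEDBACK)
    estimates = {action: 0.0 for action in actions}
    counts = {action: 0 for action in actions}
    for step in range(rounds):
        if step < len(actions):
            action = actions[step]  # try every action once first
        elif rng.random() < epsilon:
            action = rng.choice(actions)  # explore occasionally
        else:
            action = max(actions, key=estimates.get)  # exploit best estimate
        reward = FEEDBACK[action]
        counts[action] += 1
        # Incremental average: move the estimate towards the observed reward
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates, counts


estimates, counts = run_feedback_loop()
best_action = max(estimates, key=estimates.get)
print(best_action)
```

The model's behaviour is determined entirely by the rewards it receives, so the quality of the feedback determines the quality of the adjusted model.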

With this theoretical background, the next blog post “Use Cases of Artificial Intelligence in Cybersecurity” will explain some selected AI use cases in Cybersecurity, their prerequisites and their impact on the security architecture.
